How AI Voice Bots Qualify Leads Automatically (and Where They Fail)


Lead qualification is one of the most repetitive activities in any B2B sales operation. A prospect raises their hand (fills out a form, calls a number, replies to an email), and someone has to spend 5 to 15 minutes on the phone asking the same questions to figure out whether this person is worth putting in front of a closer. Multiplied across a month of inbound traffic, qualification absorbs a substantial fraction of sales team capacity, and much of that effort goes to prospects who were never going to buy.

AI voice bots are unusually well suited to this specific job. Qualification is structured, the questions are stable over time, and the outcome is discrete — qualified, disqualified, or somewhere in between. Unlike closing (which is relationship-driven and nuanced), qualification is almost entirely procedural. If you define the criteria and the questions, the AI can run the procedure at volume while the humans focus on the conversations that require judgment.

This article walks through how AI voice bots actually qualify leads in practice, the design patterns that work, the ones that do not, and where the whole approach falls apart.

What qualification is trying to accomplish

Before discussing the technology, it helps to pin down the underlying goal. Qualification is a filter. Its job is to separate two groups: prospects who are worth a sales conversation, and prospects who are not. Everything about qualification design should be optimized for getting that separation right.

There are three things qualification needs to figure out:

- Fit: is this prospect in a segment the product actually serves?

- Intent: is this prospect in a buying process, or just researching?

- Authority/budget: does this prospect have the ability to actually buy?

The classic BANT framework (Budget, Authority, Need, Timeline) formalizes this: Need corresponds roughly to fit, Timeline to intent, and Budget and Authority to the third question. Most lead qualification frameworks are variations on the same criteria. What matters is not which framework you pick but whether the questions you ask actually answer those three things.

What the AI voice bot actually does

In production, an AI voice bot running lead qualification is doing a small number of specific things on every call:

- Opening warmly and establishing context. “Hi, this is Alex with Acme Corp. I'm calling about the inquiry you submitted yesterday about our enterprise plan. Do you have a few minutes?” The tone is warm, the purpose is explicit, the escape hatch is offered.

- Asking the fit questions. These usually come in the first 60 seconds: team size, industry, current solution, specific pain point. They are the fast filters; if any answer rules the prospect out, the rest of the call is not going to qualify the lead anyway.

- Asking the intent questions. “What prompted you to look at this right now? Are you evaluating other options? Do you have a timeline in mind for making a decision?” The signal here is not just what the prospect says — it is how specific and immediate the answers are. A prospect who says “we're planning to evaluate options next quarter” is less qualified than one who says “we need to have a solution in place by the end of this month.”

- Asking the authority and budget questions. This is the hardest part to do without sounding presumptuous. Good AI bots phrase it obliquely: “When you implement something like this, who else would typically be involved in the decision?” rather than “are you the decision-maker?” The oblique version usually surfaces the same information without feeling like an interview.

- Booking the next step (if qualified) or closing politely (if not). For qualified leads, the AI schedules a meeting with a closer and confirms the calendar invite before ending the call. For disqualified leads, the AI ends the call with a brief acknowledgment (“Based on what you've shared, it sounds like our enterprise tier isn't the right fit right now — but we'd love to stay in touch if things change”) and marks the disposition in the CRM.
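Read as control flow, the sequence above is a small state machine in which a failed answer short-circuits everything after it. The sketch below is a minimal illustration under that reading. The `Stage` enum and `next_stage` helper are hypothetical names, not part of any platform's API. In an LLM-driven bot this logic usually lives in the prompt rather than in code, but writing it out makes the early-exit rule explicit and testable.

```python
from enum import Enum, auto


class Stage(Enum):
    OPENING = auto()
    FIT = auto()
    INTENT = auto()
    AUTHORITY_BUDGET = auto()
    BOOK_MEETING = auto()    # terminal: qualified
    CLOSE_POLITELY = auto()  # terminal: declined or disqualified


# Question stages in the order the call normally visits them.
QUESTION_ORDER = [Stage.OPENING, Stage.FIT, Stage.INTENT, Stage.AUTHORITY_BUDGET]


def next_stage(current: Stage, passed: bool) -> Stage:
    """Return the stage that follows `current`.

    `passed` is False when the answers gathered in `current` rule the lead out,
    e.g. the prospect declines to talk during OPENING or gives a disqualifying
    team size during FIT.
    """
    if current in (Stage.BOOK_MEETING, Stage.CLOSE_POLITELY):
        return current  # terminal stages stay put
    if not passed:
        return Stage.CLOSE_POLITELY  # early exit: skip the remaining questions
    i = QUESTION_ORDER.index(current)
    if i + 1 < len(QUESTION_ORDER):
        return QUESTION_ORDER[i + 1]
    return Stage.BOOK_MEETING  # cleared every question stage
```

For example, `next_stage(Stage.FIT, passed=False)` returns `Stage.CLOSE_POLITELY`. A prospect outside the target segment therefore never hears the intent or budget questions.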

A typical call runs 3 to 5 minutes. A human SDR doing the same work usually takes 10 to 15 minutes, mostly because of small talk, back-and-forth over calendar availability, and the interruptions of a normal workday. The AI is simply faster at the mechanical parts.

The scoring patterns that work

There are three ways to turn the raw qualification answers into a score or a pass/fail decision. Each has its place.

Threshold-based qualification. This is the simplest pattern: define a set of must-haves (company size above 50, industry in target list, buying within 90 days) and qualify any lead that meets all of them. Everyone else is disqualified. This works well when your target market is well-defined and the criteria are non-negotiable. It produces few false positives but can miss edge cases that should have qualified.
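The threshold pattern is a plain conjunction of must-haves. The sketch below is minimal and uses the three example criteria above. `TARGET_INDUSTRIES` is a hypothetical set and the `Lead` fields are illustrative; in practice the fields would be filled from the answers the bot captures during the call.

```python
from dataclasses import dataclass

# Hypothetical target list; substitute your own segments.
TARGET_INDUSTRIES = {"saas", "fintech", "healthcare"}


@dataclass
class Lead:
    company_size: int
    industry: str
    days_to_decision: int  # prospect's stated buying timeline, in days


def meets_must_haves(lead: Lead) -> bool:
    """Threshold rule: qualify only if every must-have holds."""
    return (
        lead.company_size > 50
        and lead.industry.lower() in TARGET_INDUSTRIES
        and lead.days_to_decision <= 90
    )
```

The all-or-nothing shape is also where the edge-case problem comes from. A 50-person company that is ready to buy next week fails in exactly the same way as a completely mismatched lead.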

Scored qualification. Assign weights to each qualification factor and produce a numerical score; the lead passes if the total exceeds a threshold. This is more flexible than the all-or-nothing approach, and it captures the fact that some factors matter more than others. The trade-off is complexity: you need to set the weights, calibrate the threshold, and revisit both as the market changes. Many teams overthink this and end up with scoring models that perform worse than a simple threshold.
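A weighted score replaces the conjunction with a sum. The weights and threshold below are invented for illustration and are not recommended values. They are exactly the numbers you would need to calibrate and revisit over time.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    company_size: int
    in_target_industry: bool
    days_to_decision: int
    has_budget: bool
    is_decision_maker: bool


# Hypothetical weights and threshold; calibrate against your own closed deals.
WEIGHTS = {"size": 30, "industry": 20, "timeline": 25, "budget": 15, "authority": 10}
THRESHOLD = 55  # a lead passes only if its score exceeds this


def score(lead: Lead) -> int:
    """Sum the weights of every factor the lead satisfies."""
    points = 0
    if lead.company_size > 50:
        points += WEIGHTS["size"]
    if lead.in_target_industry:
        points += WEIGHTS["industry"]
    if lead.days_to_decision <= 90:
        points += WEIGHTS["timeline"]
    if lead.has_budget:
        points += WEIGHTS["budget"]
    if lead.is_decision_maker:
        points += WEIGHTS["authority"]
    return points


def qualifies(lead: Lead) -> bool:
    return score(lead) > THRESHOLD
```

With these weights, consider a 40-person company in the target industry with budget and a 30-day timeline. It scores 60 and passes, even though it would fail the size must-have under a threshold rule. A large company in the wrong industry with no near-term timeline scores 55 and does not pass.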

Segmented qualification. Route leads into different tiers based on the qualification result. Hot leads (ready to buy, right size, right industry) go to a senior closer for a same-day meeting. Warm leads (right fit but longer timeline) go to a nurture track with a follow-up in 30 days. Cold leads (wrong fit) are politely declined and logged. This is usually the right pattern in practice because it matches the actual sales process — different leads need different treatment, not just pass/fail.
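Segmented qualification returns a tier rather than a boolean, which maps directly onto the three treatments described above. The sketch below is minimal. The 90-day cut-off for "ready to buy" is an assumption borrowed from the threshold example. The `Tier` values are only labels for whatever routing your CRM actually performs.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HOT = "senior closer, same-day meeting"
    WARM = "nurture track, follow up in 30 days"
    COLD = "decline politely and log"


@dataclass
class Lead:
    right_size: bool
    right_industry: bool
    days_to_decision: int


# Hypothetical cut-off for "ready to buy"; tune it to your sales cycle.
READY_WITHIN_DAYS = 90


def route(lead: Lead) -> Tier:
    """Route a lead to a tier instead of returning a bare pass/fail."""
    if not (lead.right_size and lead.right_industry):
        return Tier.COLD  # wrong fit
    if lead.days_to_decision <= READY_WITHIN_DAYS:
        return Tier.HOT  # right fit and ready to buy
    return Tier.WARM  # right fit, longer timeline
```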

The AI voice bot can run any of these three patterns. What matters is choosing one deliberately and instrumenting it so you can tell whether it is working. A surprising number of teams deploy qualification without a clear definition of what qualified means, and then wonder why the sales team complains about lead quality.

Where automated qualification fails

Four failure modes show up repeatedly. If you are thinking about deploying AI qualification, knowing these in advance saves a lot of debugging.

Bad lists produce bad qualification results, fast. No qualification system can make a list better than it is. If the list is stale, poorly sourced, or badly targeted, the AI will diligently classify every lead as “not a fit” and the campaign will produce zero meetings. The temptation is to loosen the criteria; the actual fix is to improve the list.

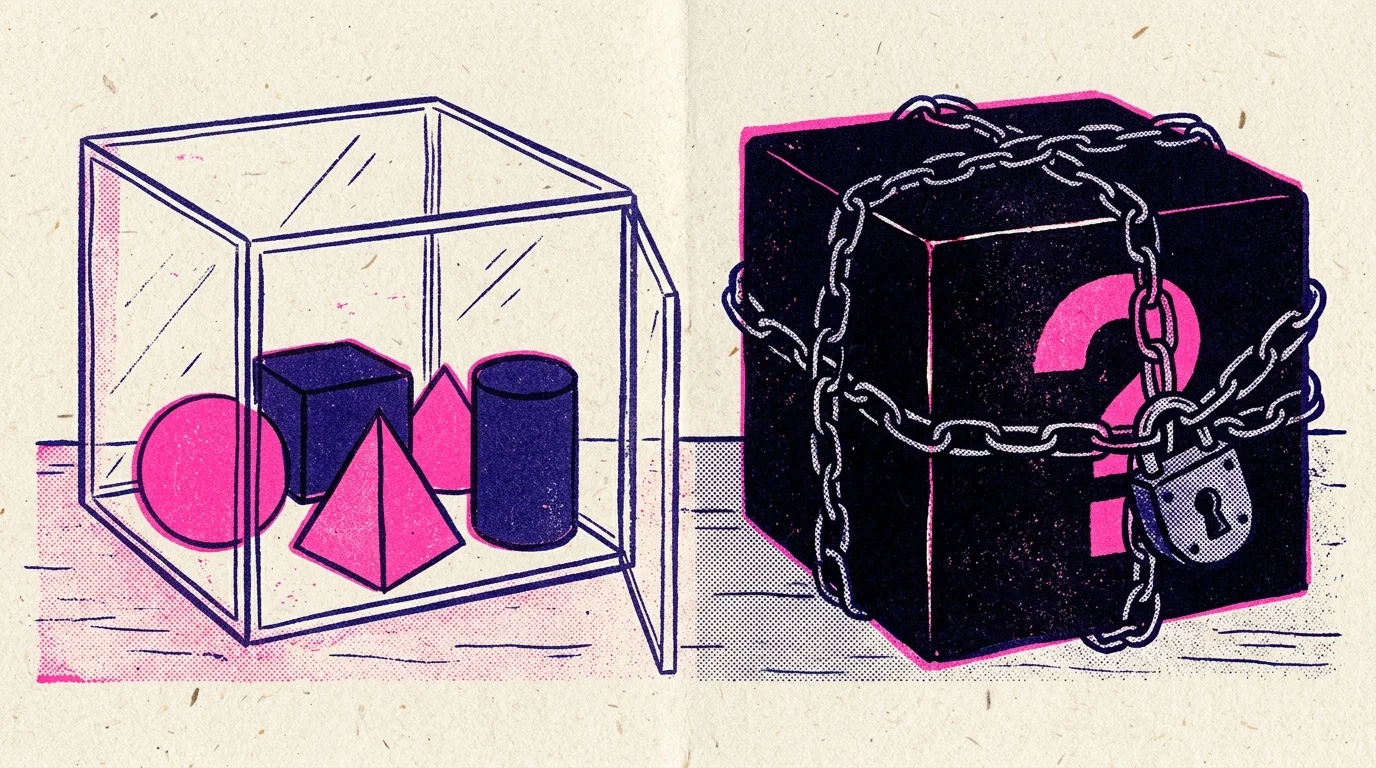

Scripts that are too rigid produce robotic-sounding calls. Early AI voice bots with tight scripts failed to sound human, and prospects disengaged within 30 seconds. Modern systems using backchanneling and natural language understanding avoid this, but only if the prompt is written conversationally rather than as a literal script. “Ask the prospect about their team size” is a prompt. “Say the following: how many people are on your team?” is a script. The prompt works; the script does not.

Criteria that are too narrow disqualify good leads. Any qualification filter produces false negatives: leads that were actually qualified but got filtered out because one of the answers was slightly off. If your filter is too narrow, the false-negative rate becomes the dominant cost of the system. The fix is to audit the disqualified leads periodically and check how many of them later converted through a different channel. If more than 10 to 15 percent of them did, your criteria are too narrow.
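Once you can join disqualified leads against later conversions from other channels, the audit is a simple set calculation. The sketch below is minimal and assumes both groups can be exported as lists of lead IDs. The function names are hypothetical. The 10 percent cut-off is the conservative end of the 10 to 15 percent rule of thumb.

```python
from collections.abc import Iterable

# Conservative end of the 10-15% rule of thumb.
TOO_NARROW_AT = 0.10


def false_negative_rate(disqualified_ids: Iterable[str], converted_ids: Iterable[str]) -> float:
    """Share of disqualified leads that later converted through another channel."""
    disqualified = set(disqualified_ids)
    if not disqualified:
        return 0.0
    # Conversions from leads that were never disqualified are ignored.
    missed = disqualified & set(converted_ids)
    return len(missed) / len(disqualified)


def criteria_too_narrow(rate: float) -> bool:
    return rate > TOO_NARROW_AT
```

For example, suppose 24 of 200 disqualified leads later converted elsewhere. The rate is 12 percent, which is above the conservative cut-off and signals that the criteria should be loosened.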

Failure to handle the emotional edge cases. The failure mode that catches most deployments by surprise: a prospect is calling because they are frustrated, angry, in the middle of a crisis, or otherwise not in a normal qualification mindset. A well-tuned AI bot recognizes this and transfers to a human immediately rather than trying to run the qualification script. A badly tuned one plows ahead with the questions, the prospect escalates, and the company ends up with a complaint. Detecting emotional state and escalating is one of the places where spending effort on the prompt pays off.
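The primary defense is a prompt instruction that lets the model judge the caller's tone. A cheap deterministic check on each transcribed utterance can also serve as a backstop. The sketch below is that kind of backstop: a crude keyword match, not real emotion detection. The phrase list is a hypothetical starting point and will miss plenty of frustrated callers.

```python
import re

# Hypothetical cues that the caller is not in a qualification mindset.
# Keyword matching is crude; treat it as a backstop to the prompt, not a replacement.
ESCALATION_PATTERNS = [
    r"\b(angry|furious|frustrated|upset)\b",
    r"\b(urgent|emergency|outage|down right now)\b",
    r"\b(cancel|refund|complaint|lawyer)\b",
    r"\b(speak|talk) to (a|an) (human|person|manager)\b",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in ESCALATION_PATTERNS]


def should_escalate(utterance: str) -> bool:
    """Return True if the caller's latest utterance matches any escalation cue."""
    return any(p.search(utterance) for p in _COMPILED)
```

When `should_escalate` returns True, the handler would stop the qualification flow and trigger the transfer. How that transfer is invoked depends on your telephony platform.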

The system prompt pattern

A working AI qualification prompt has four parts, in this order:

- Context: who the AI is, who the caller is, why the call is happening, what the goal is. “You are Alex, an inbound sales rep at Acme. The caller submitted an inquiry yesterday about our enterprise plan. Your goal is to qualify them for a 30-minute demo with one of our senior reps.”

- Qualification criteria: the specific things that make a lead qualified. Be explicit. “Qualified means: 50+ employees, US or Canada, currently using a competitor or a legacy system, planning to make a decision in the next 90 days. If any of these four conditions are not met, the lead is not qualified for the senior rep track.”

- Conversation guide: the questions to ask and the flow. This is not a script — it is guidance. “Start by confirming the caller's name and role. Ask about team size, current tool, and what prompted them to look at alternatives. Follow natural conversation flow; do not interrogate.”

- Outcome paths: what to do based on the qualification result. “If qualified, book a 30-minute demo with the senior rep calendar via the schedule_meeting tool. If not qualified, thank them politely and end the call without pressuring them. If the caller is frustrated or describes an urgent issue, transfer to the on-duty CSM.”

This structure is short enough to fit in a single system prompt, specific enough to produce consistent behavior across calls, and flexible enough that the AI can handle conversation variations naturally. Most failed qualification prompts are either too vague (just “qualify the caller” with no criteria) or too prescriptive (a literal script that breaks when the caller does not follow it).
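Keeping the four parts as separate fields lets you edit the criteria without touching the rest of the prompt. It also guarantees the parts are always assembled in the same order. The sketch below is minimal. The `QualificationPrompt` class and the `## ` section headings inside the rendered prompt are illustrative choices. The example text is taken from the bullets above.

```python
from dataclasses import dataclass


@dataclass
class QualificationPrompt:
    """The four parts of a qualification system prompt, kept separate for editing."""

    context: str
    criteria: str
    conversation_guide: str
    outcome_paths: str

    def render(self) -> str:
        """Join the parts, always in the same order, into one system prompt."""
        sections = [
            ("Context", self.context),
            ("Qualification criteria", self.criteria),
            ("Conversation guide", self.conversation_guide),
            ("Outcome paths", self.outcome_paths),
        ]
        return "\n\n".join(f"## {title}\n{body.strip()}" for title, body in sections)


prompt = QualificationPrompt(
    context=(
        "You are Alex, an inbound sales rep at Acme. The caller submitted an inquiry "
        "yesterday about our enterprise plan. Your goal is to qualify them for a "
        "30-minute demo with one of our senior reps."
    ),
    criteria=(
        "Qualified means: 50+ employees, US or Canada, currently using a competitor "
        "or a legacy system, planning to make a decision in the next 90 days. If any "
        "of these four conditions are not met, the lead is not qualified for the "
        "senior rep track."
    ),
    conversation_guide=(
        "Start by confirming the caller's name and role. Ask about team size, current "
        "tool, and what prompted them to look at alternatives. Follow natural "
        "conversation flow; do not interrogate."
    ),
    outcome_paths=(
        "If qualified, book a 30-minute demo with the senior rep calendar via the "
        "schedule_meeting tool. If not qualified, thank them politely and end the call "
        "without pressuring them. If the caller is frustrated or describes an urgent "
        "issue, transfer to the on-duty CSM."
    ),
).render()

print(prompt)
```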

The role of the human in an AI-qualified pipeline

The point of AI qualification is not to eliminate humans from the process. It is to remove them from the part of the process that does not benefit from human judgment. Once a lead is qualified, the human closer does what humans do well: build rapport, handle nuanced objections, negotiate terms, and close. The AI has done the triage.

The pattern that works best in practice: the AI qualifies, then hands off to a human through a warm transfer that includes all the qualification data. The human sees the team size, the current tool, the timeline, the pain point, and anything else the AI captured. They do not have to repeat the qualification questions; they can open the meeting at the point where the AI left off, which shortens the whole cycle and improves the closer's hit rate.
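The handoff only pays off if the captured answers reach the closer in a form they can scan in a few seconds. Below is a minimal sketch of that summary, assuming a hypothetical `QualificationRecord` populated during the call. How the text reaches the closer depends on your telephony and CRM setup. It might arrive as a CRM note, a screen pop, or a whisper message before the transfer connects.

```python
from dataclasses import dataclass, field


@dataclass
class QualificationRecord:
    """Answers captured by the bot during the qualification call (hypothetical schema)."""

    name: str
    team_size: int
    current_tool: str
    timeline: str
    pain_point: str
    notes: list[str] = field(default_factory=list)


def handoff_summary(rec: QualificationRecord) -> str:
    """Format the captured answers as a short brief for the human closer."""
    lines = [
        f"Caller: {rec.name}",
        f"Team size: {rec.team_size}",
        f"Current tool: {rec.current_tool}",
        f"Timeline: {rec.timeline}",
        f"Pain point: {rec.pain_point}",
    ]
    # Free-form observations go last so the structured fields stay scannable.
    lines.extend(f"Note: {note}" for note in rec.notes)
    return "\n".join(lines)


# Hypothetical example values.
record = QualificationRecord(
    name="Jordan",
    team_size=120,
    current_tool="legacy on-prem system",
    timeline="decision by end of month",
    pain_point="manual reporting",
    notes=["Also evaluating one competitor"],
)
print(handoff_summary(record))
```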

The economics are what make automated qualification attractive. The AI spends about 3 minutes per lead at roughly $0.20 per minute, or about $0.60 per lead. A human SDR spends about 15 minutes per lead at a fully loaded hourly rate. Running 1,000 inbound leads through AI qualification costs about $600 and produces 200 to 300 qualified meetings. Running the same 1,000 leads through human SDRs takes 250 hours, costs $3,000 to $5,000, and produces roughly the same number of qualified meetings, because the qualification work is not where the human adds value.
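Writing the arithmetic out makes it easy to substitute your own per-minute price and SDR rate. The sketch below is minimal and uses the 1,000-lead, 3-minute, $0.20-per-minute and 15-minute figures from the paragraph above. The helper names are hypothetical. The $3,000 to $5,000 human cost range implies a fully loaded rate of $12 to $20 per hour over 250 hours of SDR time, so plug in your real rate before comparing.

```python
def ai_cost(leads: int, minutes_per_lead: float, price_per_minute: float) -> float:
    """Total AI qualification cost in dollars."""
    return leads * minutes_per_lead * price_per_minute


def human_hours(leads: int, minutes_per_lead: float) -> float:
    """Total SDR time in hours for the same batch of leads."""
    return leads * minutes_per_lead / 60


def implied_hourly_rate(total_cost: float, hours: float) -> float:
    """Fully loaded hourly rate implied by a total cost spread over `hours`."""
    return total_cost / hours


LEADS = 1_000
ai_total = ai_cost(LEADS, minutes_per_lead=3, price_per_minute=0.20)
sdr_hours = human_hours(LEADS, minutes_per_lead=15)
rate_low, rate_high = (implied_hourly_rate(c, sdr_hours) for c in (3_000, 5_000))

print(f"AI: ${ai_total:,.0f} total, ${ai_total / LEADS:.2f} per lead")
print(f"SDR: {sdr_hours:.0f} hours, implied rate ${rate_low:.0f}-${rate_high:.0f}/hour")
```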

Further reading

- AI Cold Calling: How AI Phone Agents Are Replacing Manual Dialers — the outbound counterpart to inbound qualification.

- AI Outbound Calls: Build Automated Calling Campaigns — the end-to-end campaign patterns.

- 8 KPIs Every AI Outbound Calling Campaign Should Track — how to measure whether qualification is working.

- Warm Transfer — BubblyPhone Agents Glossary — the handoff pattern that makes AI qualification pay off for the human closer.

Ready to deploy AI qualification on your inbound pipeline? Sign up for BubblyPhone Agents, write a qualification prompt, wire it to your CRM through tool calls, and let the AI handle the repetitive part of the funnel.
