DTMF vs Voice Recognition: When to Use Each for AI Phone Agents

DTMF stands for Dual-Tone Multi-Frequency: the paired tones your phone produces when you press a key on the keypad. It is one of the oldest input methods still in use in telephony, dating to the 1960s, and it is still the backbone of most phone-based customer service menus today. Press 1 for sales, 2 for support, 3 for billing. DTMF works, it is reliable, it is universally supported, and nobody enjoys it.

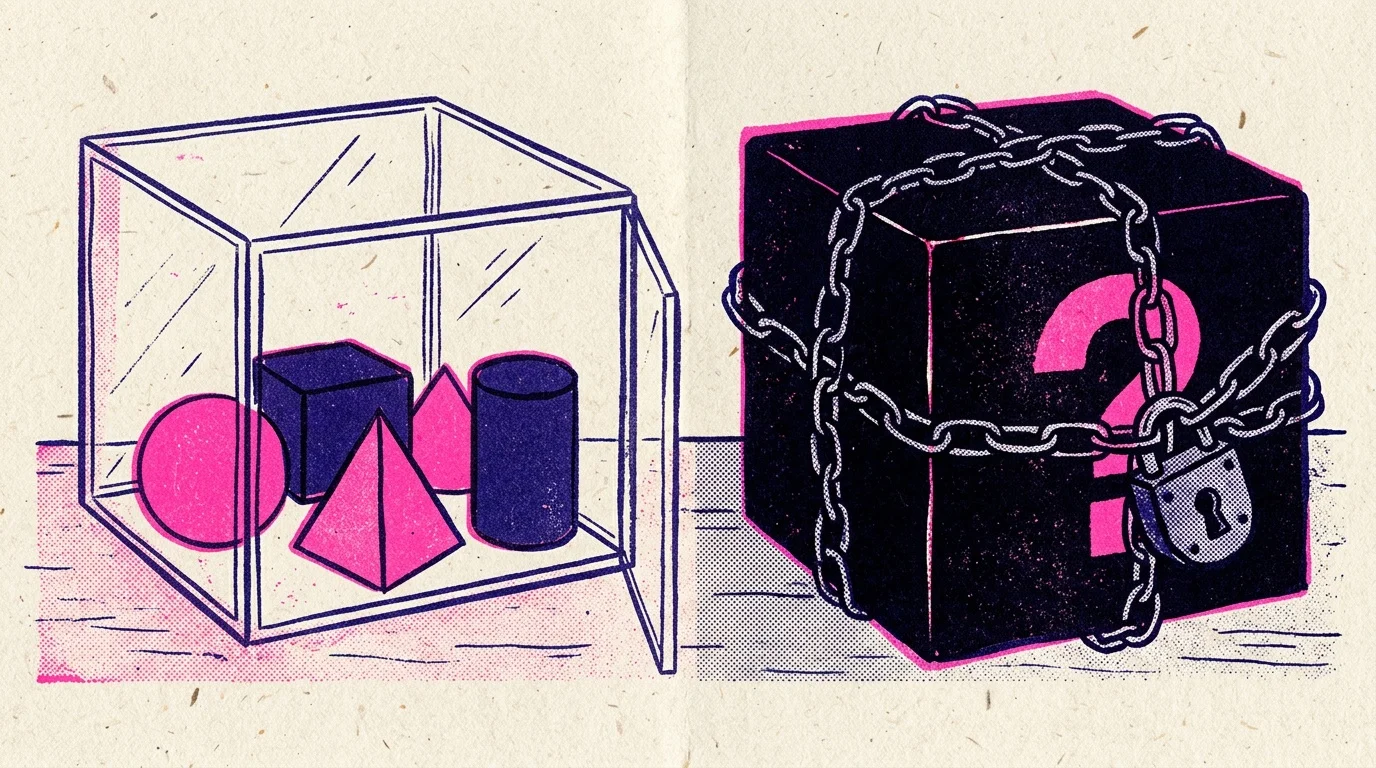
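
To make the "dual-tone" part concrete, here is a minimal sketch of how a keypress maps to audio. The frequency grid is the standard DTMF assignment; the function names, the 50 ms duration, and the 8 kHz sample rate are illustrative choices, not taken from any particular platform.

```python
import math

# Standard DTMF grid: each key is the sum of one row tone and one column tone (Hz).
ROW_HZ = (697, 770, 852, 941)
COL_HZ = (1209, 1336, 1477, 1633)
KEYPAD = ("123A", "456B", "789C", "*0#D")


def key_frequencies(key: str) -> tuple:
    """Return the (row_hz, column_hz) pair for a single keypad symbol."""
    if len(key) == 1:
        for r, row in enumerate(KEYPAD):
            c = row.find(key)
            if c != -1:
                return ROW_HZ[r], COL_HZ[c]
    raise ValueError(f"not a DTMF key: {key!r}")


def synthesize(key: str, duration_s: float = 0.05, sample_rate: int = 8000) -> list:
    """Generate float samples in [-1, 1] for one keypress (assumed duration/rate)."""
    low, high = key_frequencies(key)
    n = round(duration_s * sample_rate)
    return [
        0.5 * math.sin(2 * math.pi * low * t / sample_rate)
        + 0.5 * math.sin(2 * math.pi * high * t / sample_rate)
        for t in range(n)
    ]
```

For example, `key_frequencies("5")` gives the 770 Hz row tone and the 1336 Hz column tone. Because every key is a distinct pair drawn from two non-overlapping bands, a receiver only has to decide which one of four low tones and which one of four high tones is present.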

Voice recognition is the modern alternative: the caller speaks naturally and the system understands them. In the era of speech-to-speech models, voice recognition has finally reached a quality level where it can replace DTMF for most interactions. But there are specific cases where DTMF is still the better choice, and knowing when to use which is one of the practical design decisions in any AI phone agent deployment.

This article walks through the trade-offs, the specific cases where each input method wins, and the hybrid pattern that most production systems end up using.

Why DTMF has stuck around

There are four reasons DTMF is still everywhere in 2026 even though voice recognition is viable.

It works on virtually every phone. Essentially every touch-tone telephone made in the last several decades can produce DTMF tones, and essentially every telephony system can recognize them. There is no language barrier, no accent variation, and far less sensitivity to ambient noise than speech. If the caller can push a button, the system can receive the input. This is an unglamorous but load-bearing property.

It is effectively error-free. A DTMF tone is an unambiguous signal. The caller pressed 3; the system receives a 3, provided the network carries the keypress cleanly. Voice recognition, even with state-of-the-art models, has a nonzero error rate. For inputs where the cost of a mistake is high (entering an account number, confirming an identity, navigating to the right department), the near-zero error rate of DTMF matters.
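
The reason a keypress is so easy to decode is that the receiver only needs to measure energy at eight known frequencies. A common way to do that is the Goertzel algorithm; the sketch below is a simplified illustration, with an assumed 8 kHz sample rate and an arbitrary `min_power` threshold. Production decoders add checks this sketch omits (minimum tone duration, relative level of the two tones, rejection of speech), and many VoIP platforms deliver keypresses as out-of-band events rather than decoding audio at all.

```python
import math

ROW_HZ = (697, 770, 852, 941)
COL_HZ = (1209, 1336, 1477, 1633)
KEYPAD = ("123A", "456B", "789C", "*0#D")


def goertzel_power(samples, freq_hz, sample_rate):
    """Squared magnitude of the signal at one frequency (Goertzel recurrence)."""
    coeff = 2.0 * math.cos(2.0 * math.pi * freq_hz / sample_rate)
    s1 = s2 = 0.0
    for x in samples:
        s0 = x + coeff * s1 - s2
        s2, s1 = s1, s0
    return s1 * s1 + s2 * s2 - coeff * s1 * s2


def detect_key(samples, sample_rate=8000, min_power=1.0):
    """Return the key whose row and column tones are strongest, or None for silence.

    min_power is an illustrative threshold, not a tuned value.
    """
    row_power = [goertzel_power(samples, f, sample_rate) for f in ROW_HZ]
    col_power = [goertzel_power(samples, f, sample_rate) for f in COL_HZ]
    r = max(range(4), key=row_power.__getitem__)
    c = max(range(4), key=col_power.__getitem__)
    if row_power[r] < min_power or col_power[c] < min_power:
        return None
    return KEYPAD[r][c]
```

Contrast this with speech recognition, where the system has to choose among an open-ended set of words: here the decision space is four row tones times four column tones, which is why the error rate can be driven so low.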

It does not reveal sensitive information. If a caller is in a public place and needs to enter a PIN or a credit card number, DTMF is private in a way that speaking the number is not. Nobody at the coffee shop hears the caller's keypresses. For PCI-compliant card capture specifically, DTMF is the standard pattern because it keeps the sensitive data out of the caller's audible environment and, when the capture layer masks or suppresses the tones, out of the call's audio recording as well.

It is fast for power users. A frequent caller who knows the menu can hit 4-2-1 in about two seconds to get to the right place. A voice-driven version of the same interaction usually takes noticeably longer, often several times as long, because the caller has to wait for prompts and speak full phrases. For repeat callers navigating familiar menus, DTMF wins on time.

Why voice recognition won for most interactions

Those advantages kept DTMF alive, but they did not prevent voice recognition from taking over the majority of new deployments for three specific reasons.

Callers hate menu trees. The single most universally disliked feature of phone-based customer service is the nested menu. “Press 1 for English, 2 for billing, 3 for...” By the time the caller has navigated three levels down, most of them are frustrated enough that the rest of the interaction is colored by it. Voice recognition replaces the tree with a natural question: “how can I help you?” and the caller answers in their own words. The experience is dramatically better and the resolution rate often goes up because the caller did not get lost in the tree.

Menu trees do not handle novel situations. Every DTMF menu has a fixed set of options. If the caller's situation does not fit any of them, they end up at the operator, or they hang up. Voice recognition can handle variations. A caller who says “I need to talk to someone about my bill from last month that looks wrong” gets routed correctly even if the DTMF menu has no option for “bill from last month that looks wrong.”

LLMs make voice recognition useful in ways that were not possible before. The old voice recognition systems (the ones that predate large language models) required narrow intent detection — you had to predict what the caller might say and train the system on those variations. LLM-backed voice agents generalize: they can understand novel phrasings, infer intent from incomplete input, and ask clarifying questions when they need more information. This is a qualitative shift from what was possible five years ago, and it is the reason DTMF-first deployments are now the exception rather than the rule.

The cases where DTMF still wins

There are four specific scenarios where DTMF is still the better choice, regardless of how good voice recognition gets.

Secure number entry (PCI card capture, SSN, PIN). This is the strongest case for DTMF in 2026. When a caller needs to enter sensitive numeric data, DTMF is the compliant and private way to do it. PCI DSS auditors generally prefer DTMF card capture over voice-based capture for the privacy reasons described earlier: with tone masking in place, it keeps the data out of the recorded audio and away from anyone within earshot of the caller. Most AI phone agents that need to collect payment information do so by handing off to a DTMF capture layer temporarily, then resuming the voice conversation.

Authenticated menus where accuracy is critical. If the caller is navigating a menu where getting to the wrong place has real consequences (financial account management, medical records, legal matters), DTMF is more reliable than voice. You do not want a banking customer who said “transfer” to be routed to “transfer funds” when they meant “transfer to agent.” A single keypress is unambiguous in a way that spoken words are not.

Accessible interaction for speech-impaired users. Some callers cannot speak clearly, cannot speak the supported language, or are in environments where speech is not possible. DTMF works for all of these cases. Any well-designed AI phone system in 2026 offers a DTMF fallback for exactly this reason, and it is commonly treated as part of meeting accessibility obligations under laws such as the ADA in the US and similar rules elsewhere.

Repeat-caller shortcuts. For systems with many repeat callers, providing a DTMF shortcut (“if you know your party's extension, press it now”) is a power-user feature that saves time. Voice recognition still has to go through the natural language intake, which is slower than a few keypresses.

The hybrid pattern

Most production AI phone agents in 2026 use a hybrid pattern: voice-first for the main conversation, DTMF for specific moments within it. The pattern looks like this:

- Voice opens the call. “Thanks for calling Acme, how can I help you today?” The caller describes their situation naturally.

- Voice handles the conversation. The AI agent understands the caller, runs qualification, and collects information end-to-end, using the same natural language exchange that makes modern voice agents feel human.

- DTMF handles the secure moments. When the caller needs to enter payment information, a social security number, an account PIN, or any other sensitive numeric data, the AI agent says “I'm going to send you to our secure payment capture — please enter your card number followed by the pound sign” and hands off to a DTMF capture layer. The card number enters through DTMF, the AI agent receives a success signal (not the card number itself), and the voice conversation resumes.

- DTMF handles the fallback. Throughout the call, the AI agent offers “press 0 at any time to speak to a person” as an escape hatch. Callers who are struggling with the voice interaction, who have accessibility needs, or who simply prefer human help have a reliable way to opt out.

This pattern gives you the best of both worlds: the natural conversational quality of voice recognition for the bulk of the interaction, and the reliability and compliance of DTMF for the specific moments where it matters.
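
The mode switching in the hybrid pattern can be sketched as a small state machine. This is an illustration under stated assumptions, not a platform API: `HybridCall`, `Mode`, and the event names are hypothetical, the voice conversation itself is not modeled, and a real deployment would route digits to a secure capture service instead of holding them in process memory.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class Mode(Enum):
    VOICE = auto()        # normal conversation
    SECURE_DTMF = auto()  # keypad-only capture of sensitive digits
    HUMAN = auto()        # caller asked for a person


@dataclass
class HybridCall:
    mode: Mode = Mode.VOICE
    digits: list = field(default_factory=list, repr=False)

    def begin_secure_capture(self) -> None:
        """Called when the agent announces the secure capture step."""
        self.digits.clear()
        self.mode = Mode.SECURE_DTMF

    def on_keypress(self, key: str) -> Optional[str]:
        """Handle one keypress; return an event name for the agent, or None."""
        if self.mode is Mode.VOICE:
            if key == "0":  # escape hatch offered throughout the call
                self.mode = Mode.HUMAN
                return "transfer_to_human"
            return None  # other keys are ignored during conversation
        if self.mode is Mode.SECURE_DTMF:
            if key == "#":  # pound sign ends the entry
                count = len(self.digits)
                self.digits.clear()  # the sketch discards digits; it never returns them
                self.mode = Mode.VOICE
                return "capture_complete" if count else "capture_empty"
            if key.isdigit():
                self.digits.append(key)  # "0" is a digit here, not an escape
            return None
        return None
```

Note the one design decision the sketch makes explicit: during secure capture, 0 has to be treated as a digit, so the "press 0 for a person" escape only applies while the call is in voice mode.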

Implementation notes for AI phone agents

For an AI phone agent built on BubblyPhone Agents or a similar platform, the DTMF handling is usually done through a combination of platform features and tool calls. The voice portion runs through the normal streaming-mode pipeline. At the moment when sensitive input is needed, the agent invokes a tool that hands off to a DTMF-capable payment processor or secure capture service. The agent's role during the DTMF interaction is to wait and confirm success, not to handle the raw data.
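
As a rough sketch of that division of labor, the tool below returns only a status and the last four digits to the agent, while the opaque payment token stays in server-side storage. Everything here is hypothetical: `CaptureResult`, `collect_card_payment`, the `secure_capture` callable, and the `token_store` dictionary are stand-ins for whatever your capture service and platform actually provide, not BubblyPhone Agents APIs.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class CaptureResult:
    """What a hypothetical secure capture service reports back."""
    ok: bool
    payment_token: Optional[str] = None  # opaque reference, never the card number
    last4: Optional[str] = None


def collect_card_payment(
    call_id: str,
    secure_capture: Callable[[str], CaptureResult],
    token_store: Dict[str, str],
) -> dict:
    """Tool invoked by the agent. Returns only what is safe to show the model."""
    result = secure_capture(call_id)  # blocks while the caller keys in digits
    if not result.ok or result.payment_token is None:
        return {"status": "failed", "next_step": "offer_retry_or_human"}
    token_store[call_id] = result.payment_token  # kept outside the agent's context
    return {"status": "captured", "card_ending": result.last4}
```

The agent can then say something like "thanks, I have the card ending in 1111" without the full number or the token ever entering the conversation transcript or the model's context.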

A few practical notes:

- Make the DTMF fallback obvious. If you are offering “press 0 for an operator,” say it early and say it clearly. Hiding the fallback behind a long menu defeats its purpose.

- Do not use DTMF for navigation in voice-first agents. The old IVR tree pattern (“press 1 for sales, 2 for support”) should not exist in an AI voice agent — that is what the natural language opening is for. Reserve DTMF for specific secure or accessibility use cases.

- Test DTMF handoff explicitly. The handoff between voice and DTMF is a place where integration bugs hide. A caller who is told “enter your card number now” and whose keystrokes do not register has a very bad experience. Make sure the test suite covers this path.
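
One way to cover the handoff path is to replay timestamped keypress events through the collector and assert on the outcome. The sketch below assumes a hypothetical `collect_digits` helper with an illustrative 5-second inter-digit timeout; the test functions are written in plain pytest style and would need adapting to however your platform surfaces keypress events.

```python
def collect_digits(events, terminator="#", max_gap_s=5.0, start_s=0.0):
    """Collect digits from (timestamp_s, key) events until the terminator.

    Returns (status, digits) where status is "ok", "no_input", or "timeout".
    The timeout value is an assumption for illustration.
    """
    digits = []
    last = start_s
    for ts, key in events:
        if ts - last > max_gap_s:
            return ("timeout", "".join(digits))
        last = ts
        if key == terminator:
            return ("ok", "".join(digits)) if digits else ("no_input", "")
        if key.isdigit():
            digits.append(key)
    return ("timeout", "".join(digits))  # stream ended without a terminator


def test_keypresses_register():
    events = [(1.0, "4"), (1.4, "2"), (1.9, "4"), (2.3, "2"), (2.8, "#")]
    assert collect_digits(events) == ("ok", "4242")


def test_no_keypresses_after_prompt_times_out():
    # The failure mode from the text: the caller presses keys, nothing arrives.
    assert collect_digits([]) == ("timeout", "")


def test_long_pause_times_out():
    events = [(1.0, "4"), (9.0, "2"), (9.5, "#")]
    assert collect_digits(events) == ("timeout", "4")


def test_terminator_only_is_no_input():
    assert collect_digits([(1.0, "#")]) == ("no_input", "")
```

Each outcome should map to a spoken recovery step (retry the prompt, or offer a human), so the caller is never left in silence when keystrokes fail to register.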

What about touch-tone fraud?

A specific concern in financial services: DTMF can be abused by fraudsters in two ways. One, if the DTMF tones leak into a call recording or transcript, the card numbers become extractable. Two, DTMF can be generated by software, which means a bot calling in can navigate menus the same way a human would. Both of these are mitigated by using purpose-built secure DTMF capture services that strip the tones from the audio stream before recording, and by adding anti-bot detection (unusual timing patterns, unusual source numbers) at the platform level. If your deployment touches payment data, work with your PCI compliance team on the specific configuration — the defaults on most platforms are sensible, but it is worth verifying.
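
To illustrate the "unusual timing patterns" signal, here is a minimal heuristic that flags keypress sequences that are implausibly fast or implausibly regular. The function name and both thresholds are assumptions chosen for illustration, not tuned values, and a flag like this would normally be one input to a broader risk score rather than grounds for blocking a call on its own.

```python
from statistics import pstdev


def looks_machine_generated(press_times_s, min_gap_s=0.08, min_jitter_s=0.01):
    """Flag keypress timing that a human is unlikely to produce.

    press_times_s: ascending timestamps (seconds) of each keypress.
    min_gap_s and min_jitter_s are illustrative thresholds only.
    """
    if len(press_times_s) < 4:
        return False  # too little data to judge
    gaps = [b - a for a, b in zip(press_times_s, press_times_s[1:])]
    too_fast = min(gaps) < min_gap_s           # faster than a finger can move
    too_regular = pstdev(gaps) < min_jitter_s  # metronome-like spacing
    return too_fast or too_regular
```

A human entering digits tends to show uneven gaps of a few hundred milliseconds, while software-generated tones are often evenly spaced, which is the pattern the second check looks for.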

Further reading

- IVR — BubblyPhone Agents Glossary — the menu-tree ancestor of DTMF-heavy systems.

- Call Flow — BubblyPhone Agents Glossary — how voice-first call flows replaced the old DTMF menu trees.

- Is It Legal to Record Phone Calls? — relevant for DTMF capture of sensitive data, which interacts with recording rules.

- Voice App Development: A Complete Guide — the architecture patterns for building hybrid voice-plus-DTMF deployments.

Ready to build an AI phone agent that uses the right input method for each moment in the call? Sign up for BubblyPhone Agents and configure your voice agent with DTMF fallback for accessibility and secure capture.
